Amazon.com: Chip Clips, Chip Clips Bag Clips Food Clips, Bag Clips for Food, Chip Bag Clip, Food Clips, PVC-Coated Clips for Food Packages, Paper Clips, Clothes Pin(Mixed Colors 30 PCs) : Office

Romain Beaumont on Twitter: "@AccountForAI and I trained a better multilingual encoder aligned with openai clip vit-l/14 image encoder. https://t.co/xTgpUUWG9Z 1/6 https://t.co/ag1SfCeJJj"

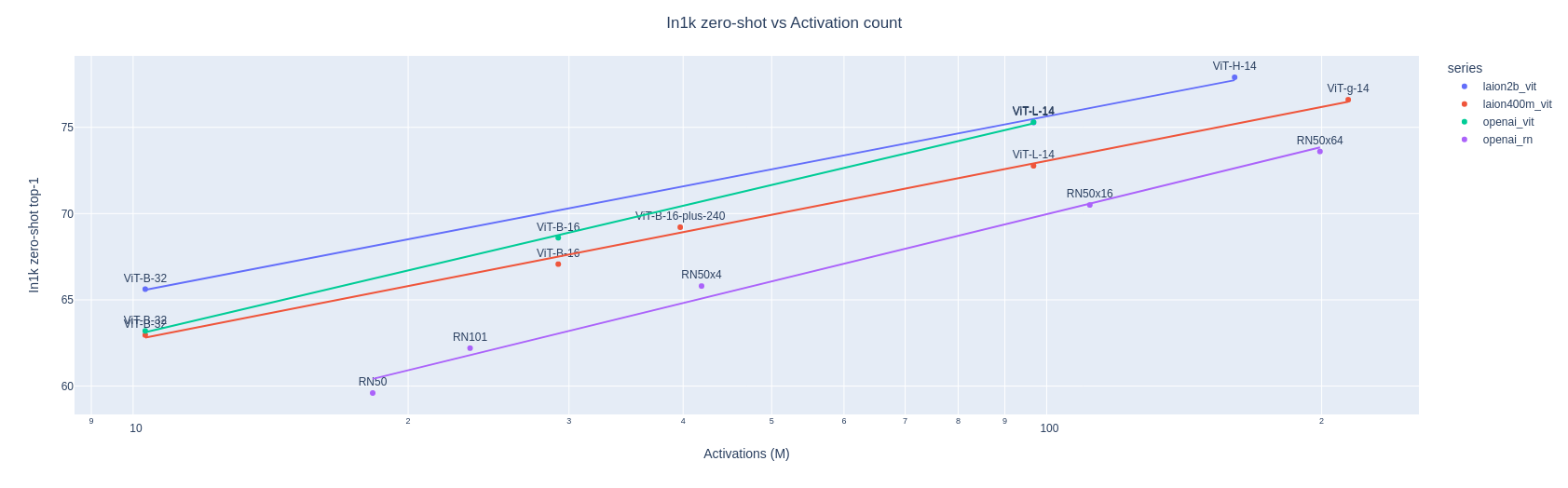

apolinário (multimodal.art) on Twitter: "Yesterday OpenCLIP released the first LAION-2B trained perceptor! a ViT-B/32 CLIP that surpasses OpenAI's ViT-B/32 quite significantly: https://t.co/X4vgW4mVCY https://t.co/RLMl4xvTlj"